MySQL Group Replication: Difference between revisions

| Line 127: | Line 127: | ||

Repeat these steps for all of the remaining servers | Repeat these steps for all of the remaining servers | ||

* Creating the cluster: | * Creating the cluster: | ||

'''First DB Server:''' Connect to the first db server using the mysql shell from the lxc host server | |||

<pre> | <pre> | ||

kedar@infrabase1:~$ mysqlsh | kedar@infrabase1:~$ mysqlsh | ||

| Line 160: | Line 160: | ||

} | } | ||

</pre> | </pre> | ||

'''Second DB Server''': Connect to the second db server using the mysql shell from the lxc host server | |||

<pre> | <pre> | ||

MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db2:3306'); | MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db2:3306'); | ||

| Line 200: | Line 200: | ||

} | } | ||

</pre> | </pre> | ||

'''Third DB Server''': Connect to the third db server using the mysql shell from the lxc host server | |||

<pre> | <pre> | ||

MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db3:3306'); | MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db3:3306'); | ||

Revision as of 09:10, 9 June 2020

| LXC Host Details |

hostname: infrabase1 Network: 10.1.65.0/24 IP Address : 10.1.65.9 Subnet Mask: 255.255.255.0 Gateway: 10.1.65.1 DNS: 8.8.8.8 Reverse Proxy setup: Nginx Server OS: Ubuntu 20.04 Edition: LTS, server sudo user: kedar FTP Client: Filezilla ssh client: terminal, reminna Text editors: sublime-text User PC Details PC type: Desktop OS: Ubuntu Desktop IP Address: 10.1.65.173 sudo user:kedar LXC Containers - db1 (MySQL8.0) - db2 (MySQL8.0) - db3 (MySQL8.0) - db4 (MySQL8.0) - web1 (MySQL Router+Apache) |

Introduction

This article explains how to create a MySQL8.0 HA cluster using group replication and MySQL router. It is important to note that I am using an Ubuntu 20.04 LTS server edition as the LXC host OS and have created 4 Ubuntu 20.04 containers in it and installed MySQL 8.0 from the repositories. The containers have private IPs and they are not reachable on public network. A reverse proxy is setup on the LXC host server so that application setup on the containers are accessible on port 443 or port 80. It can be found here.

Basic preparation

Container details are mentioned below. Host entries have been made into respective servers so that the servers can reach every other server using a hostname instead of an IP.

| # | Container | Hostname | IP Address | Software installed |

|---|---|---|---|---|

| 1 | Container 1 | db1 | 10.0.3.202 | mysql server 8.0 |

| 2 | Container 2 | db2 | 10.0.3.246 | mysql server 8.0 |

| 3 | Container 3 | db3 | 10.0.3.175 | mysql server 8.0 |

| 4 | Container 4 | db4 | 10.0.3.26 | mysql server 8.0 |

| 5 | Container 5 | web1 | 10.0.3.159 | mysql-client, apache2, php7.4, wordpress, mysqlrouter |

Wordpress will be setup on web1 container and mysqlrouter will also be placed on the same application server. Wordpress will connect to the mysqlrouter like a local mysql db instance. Mysqlrouter will route it to the necessary mysql server in the cluster.

Preparing MySQL Servers

The below steps have to be done on all four servers without exception

- Install mysql server 8.0

root@db1:/# apt install mysql-server nano

- Run secure installation

root@db1:/# mysql_secure_installation

If you are doing a POC, answer 'N' for the first question. It asks if password complexity should be enabled. You can keep short passwords so that it is simpler to try out in a POC. For a production environment you should enable password complexity. Answer 'Y' for all further questions. This will disable remote 'root login' on the server and also remove anonymous users and test databases from the MySql server.

- Create user for cluster management

Login to sql server and create a user and grant all permissions. Since this is a POC, you can be a bit liberal with the permissions, otherwise checkout the documentation on permissions for group replication user on MySQL website.

root@db1:/# mysql -u root -p mysql> CREATE USER 'clustermgr'@'%' IDENTIFIED BY '123'; mysql> GRANT ALL PRIVILEGES ON *.* TO 'clustermgr'@'%'WITH GRANT OPTION; mysql> FLUSH PRIVILEGES; mysql> EXIT;

- Allow remote database connection

root@db1:/# nano /etc/mysql/mysql.conf.d/mysqld.cnf

Comment the bind address directive so that this database can be connected from other sources.

. #bind-address = 127.0.0.1 .

- Repeat the above steps in all the four servers, exactly in the same manner. Do not change password of the user or the username. In POC keep it same otherwise they can be different.

Setting up MySQL Shell

We shall be setting up the mysql shell on the lXC host server (10.1.65.9) from where the lxc containers running the mysql servers are reachable. Group replication will be setup using the mysql-shell program. Group replication can also be setup without the mysql-shell, however serveral modifications are required in the configuration files. Mysql-shell automates the configuration and helps in managing the cluster as well.

It is important to note that Ubuntu 20.04 does not have a mysql-shell available in the default apt repository for Ubuntu 20.04. However, it is available as a snap. If you install mysql-shell snap, then javascript is not enabled by default for the mysql-shell snap. For setting up the cluster mysql-shell needs javascript. We therefore download the mysql-shell deb file from mysql website and manually install it on LXC host server.

kedar@infrabase1:~$ wget https://dev.mysql.com/get/Downloads/MySQL-Shell/mysql-shell_8.0.20-1ubuntu20.04_amd64.deb kedar@infrabase1:~$ sudo dpkg -i mysql-shell_8.0.20-1ubuntu20.04_amd64.deb

Once it is installed, you should be able to connect to the db servers using 'clustermgr' credentials. Use the following command

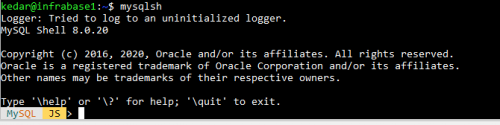

kedar@infrabase1:~$ mysqlsh

It should look like below

Setting the cluster

- Log into the first db1 through the shell.

kedar@infrabase1:~$ mysqlsh

At the shell enter the following

MySQL-js> dba.checkInstanceConfiguration('clustermgr@db1:3306')

MySQL-js> dba.configureLocalInstance('clustermgr@db1:3306')

By pressing ^D, you can exit the mysql-shell

Ssh into the mysql server and restart the server

root@db1:/# systemctl restart mysql

Repeat these steps for all of the remaining servers

- Creating the cluster:

First DB Server: Connect to the first db server using the mysql shell from the lxc host server

kedar@infrabase1:~$ mysqlsh

MySQL-js> shell.connect('clustermgr@db1:3306')

MySQL| db1:3306 ssl -js> cluster = dba.createCluster('myCluster');

MySQL| db1:3306 ssl -js> cluster.status()

It will appear as below

{

"clusterName": "myCluster",

"defaultReplicaSet": {

"name": "default",

"primary": "db1:3306",

"ssl": "REQUIRED",

"status": "OK_NO_TOLERANCE",

"statusText": "Cluster is NOT tolerant to any failures.",

"topology": {

"db1:3306": {

"address": "db1:3306",

"mode": "R/W",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

}

},

"topologyMode": "Single-Primary"

},

"groupInformationSourceMember": "db1:3306"

}

Second DB Server: Connect to the second db server using the mysql shell from the lxc host server

MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db2:3306');

MySQL| db1:3306 ssl -js> cluster.status()

The cluster status will appear as below

{

"clusterName": "myCluster",

"defaultReplicaSet": {

"name": "default",

"primary": "db1:3306",

"ssl": "REQUIRED",

"status": "OK_NO_TOLERANCE",

"statusText": "Cluster is NOT tolerant to any failures.",

"topology": {

"db1:3306": {

"address": "db1:3306",

"mode": "R/W",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

},

"db2:3306": {

"address": "db2:3306",

"mode": "R/O",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

}

},

"topologyMode": "Single-Primary"

},

"groupInformationSourceMember": "db1:3306"

}

Third DB Server: Connect to the third db server using the mysql shell from the lxc host server

MySQL|db1:3306 ssl|JS> cluster.addInstance('clustermgr@db3:3306');

MySQL| db1:3306 ssl -js> cluster.status()

The cluster status will appear as below

{

"clusterName": "myCluster",

"defaultReplicaSet": {

"name": "default",

"primary": "db1:3306",

"ssl": "REQUIRED",

"status": "OK",

"statusText": "Cluster is ONLINE and can tolerate up to ONE failure.",

"topology": {

"db1:3306": {

"address": "db1:3306",

"mode": "R/W",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

},

"db2:3306": {

"address": "db2:3306",

"mode": "R/O",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

},

"db3:3306": {

"address": "db3:3306",

"mode": "R/O",

"readReplicas": {},

"replicationLag": null,

"role": "HA",

"status": "ONLINE",

"version": "8.0.20"

}

},

"topologyMode": "Single-Primary"

},

"groupInformationSourceMember": "db1:3306"

}

All three servers are added in the cluster. You can add the fourth one too in the same manner. At any given point in time, only server will be primary and the remaining would be in read-only mode. In case of a failure, one of the two servers will become primary and will be in read/write mode and the other one will be in a read-only mode.